
- Reference: PyTorch

Transfer Learning Tutorial

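- In practice it is relatively hard to obtain a sufficiently large dataset
- Transfer learning is used to work around this
- Two approaches to transfer learning
  - Fine-tuning the convolutional network
    - Instead of random initialization, initialize the network from a pretrained one and then train it
  - Convolutional network as a fixed feature extractor
    - Freeze the weights of the whole network except the final fully connected (FC) layer
    - Replace the final FC layer with a new layer that has random weights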

Loading the training data

- 75 validation images

- Classes: ants, bees

import copy
import os
import time

import matplotlib.pyplot as plt
import numpy as np
import torch
import torch.nn as nn
import torch.optim as optim
import torchvision
from torch.optim import lr_scheduler
from torchvision import datasets, models, transforms

# Data augmentation and normalization for training;
# only resizing, cropping, and normalization for validation
data_transforms = {
    'train': transforms.Compose([
        transforms.RandomResizedCrop(224),
        transforms.RandomHorizontalFlip(),
        transforms.ToTensor(),
        # ImageNet per-channel mean and standard deviation
        transforms.Normalize([0.485, 0.456, 0.406], [0.229, 0.224, 0.225])
    ]),
    'val': transforms.Compose([
        transforms.Resize(256),
        transforms.CenterCrop(224),
        transforms.ToTensor(),
        transforms.Normalize([0.485, 0.456, 0.406], [0.229, 0.224, 0.225])
    ]),
}

data_dir = 'hymenoptera_data'

# One ImageFolder dataset and one DataLoader per split
image_datasets = {x: datasets.ImageFolder(os.path.join(data_dir, x), data_transforms[x])
                  for x in ['train', 'val']}
dataloaders = {x: torch.utils.data.DataLoader(image_datasets[x], batch_size=4,
                                              shuffle=True, num_workers=4)
               for x in ['train', 'val']}
dataset_sizes = {x: len(image_datasets[x]) for x in ['train', 'val']}
class_names = image_datasets['train'].classes

use_gpu = torch.cuda.is_available()

Visualizing the data

def imshow(inp, title=None):
    """Display a normalized image tensor of shape C x H x W."""
    inp = inp.numpy().transpose((1, 2, 0))  # C x H x W -> H x W x C
    mean = np.array([0.485, 0.456, 0.406])
    std = np.array([0.229, 0.224, 0.225])
    inp = std * inp + mean  # undo transforms.Normalize
    inp = np.clip(inp, 0, 1)
    plt.imshow(inp)
    if title is not None:
        plt.title(title)
    plt.pause(0.001)  # pause a bit so that plots are updated

# Get a batch of training data
inputs, classes = next(iter(dataloaders['train']))

# Make a grid from the batch
out = torchvision.utils.make_grid(inputs)

imshow(out, title=[class_names[x] for x in classes])
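The `std * inp + mean` step in `imshow` is the inverse of `transforms.Normalize`, which computes `(x - mean) / std` per channel. As a minimal sketch in plain Python (the pixel value and the helper names `normalize` and `denormalize` are hypothetical, introduced only for illustration), the round trip can be checked on a single RGB pixel:

```python
# ImageNet per-channel statistics, the same values passed to transforms.Normalize above.
MEAN = [0.485, 0.456, 0.406]
STD = [0.229, 0.224, 0.225]


def normalize(pixel):
    """Apply (x - mean) / std to one RGB pixel with values in [0, 1]."""
    return [(x - m) / s for x, m, s in zip(pixel, MEAN, STD)]


def denormalize(pixel):
    """Invert normalize() with std * x + mean, clipped to [0, 1] as imshow() does."""
    return [min(max(s * x + m, 0.0), 1.0) for x, m, s in zip(pixel, MEAN, STD)]


pixel = [0.2, 0.5, 0.9]  # hypothetical RGB pixel, not taken from the dataset
restored = denormalize(normalize(pixel))
print(restored)  # matches the original pixel up to floating-point rounding
```

The clipping only matters for values that drift slightly outside [0, 1] after the round trip, which is why `imshow` calls `np.clip` before plotting.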

모델 학습 함수

def train_model(model, criterion, optimizer, scheduler, num_epochs=25):

since = time.time()

best_model_wts = copy.deepcopy(model.state_dict())

best_acc = 0.0

for epoch in range(num_epochs):

print('Epoch {}/{}'.format(epoch, num_epochs - 1))

print('-' * 10)

# Each epoch has a training and validation phase

for phase in ['train', 'val']:

if phase == 'train':

scheduler.step()

model.train(True) # Set model to training mode

else:

model.train(False) # Set model to evaluate mode

running_loss = 0.0

running_corrects = 0

# Iterate over data.

for data in dataloaders[phase]:

# get the inputs

inputs, labels = data

# wrap them in Variable

if use_gpu:

inputs = Variable(inputs.cuda())

labels = Variable(labels.cuda())

else:

inputs, labels = Variable(inputs), Variable(labels)

# zero the parameter gradients

optimizer.zero_grad()

# forward

outputs = model(inputs)

_, preds = torch.max(outputs.data, 1)

loss = criterion(outputs, labels)

# backward + optimize only if in training phase

if phase == 'train':

loss.backward()

optimizer.step()

# statistics

running_loss += loss.item() * inputs.size(0)

running_corrects += torch.sum(preds == labels.data)

epoch_loss = running_loss / dataset_sizes[phase]

epoch_acc = running_corrects / dataset_sizes[phase]

print('{} Loss: {:.4f} Acc: {:.4f}'.format(

phase, epoch_loss, epoch_acc))

# deep copy the model

if phase == 'val' and epoch_acc > best_acc:

best_acc = epoch_acc

best_model_wts = copy.deepcopy(model.state_dict())

print()

time_elapsed = time.time() - since

print('Training complete in {:.0f}m {:.0f}s'.format(

time_elapsed // 60, time_elapsed % 60))

print('Best val Acc: {:4f}'.format(best_acc))

# load best model weights

model.load_state_dict(best_model_wts)

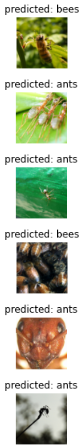

return model- 학습 예측값 시각화 함수

def visualize_model(model, num_images=6):
    was_training = model.training
    model.eval()
    images_so_far = 0
    fig = plt.figure()

    # No gradients are needed for inference
    with torch.no_grad():
        for i, (inputs, labels) in enumerate(dataloaders['val']):
            if use_gpu:
                inputs, labels = inputs.cuda(), labels.cuda()

            outputs = model(inputs)
            _, preds = torch.max(outputs, 1)

            for j in range(inputs.size(0)):
                images_so_far += 1
                ax = plt.subplot(num_images // 2, 2, images_so_far)
                ax.axis('off')
                ax.set_title('predicted: {}'.format(class_names[preds[j]]))
                imshow(inputs.cpu()[j])

                if images_so_far == num_images:
                    # Restore the mode the model was in before visualization
                    model.train(mode=was_training)
                    return

    model.train(mode=was_training)

Fine-tuning the convolutional network

- Load a pretrained model and reset only the final FC layer

# Note: newer torchvision releases prefer the `weights=` argument over `pretrained=True`
model_ft = models.resnet18(pretrained=True)
num_ftrs = model_ft.fc.in_features
model_ft.fc = nn.Linear(num_ftrs, 2)  # two output classes: ants and bees

if use_gpu:
    model_ft = model_ft.cuda()

criterion = nn.CrossEntropyLoss()

# Observe that all parameters are being optimized
optimizer_ft = optim.SGD(model_ft.parameters(), lr=0.001, momentum=0.9)

# Decay LR by a factor of 0.1 every 7 epochs
exp_lr_scheduler = lr_scheduler.StepLR(optimizer_ft, step_size=7, gamma=0.1)

model_ft = train_model(model_ft, criterion, optimizer_ft, exp_lr_scheduler, num_epochs=25)

>>> Best val Acc: 0.947712
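To make the "decay LR by a factor of 0.1 every 7 epochs" comment concrete, here is a minimal sketch of the learning rates that `StepLR(step_size=7, gamma=0.1)` produces over 25 epochs. It uses the closed form `base_lr * gamma ** (epoch // step_size)` in plain Python and assumes the scheduler is stepped exactly once per epoch; the helper name `step_lr` is hypothetical.

```python
def step_lr(base_lr, epoch, step_size=7, gamma=0.1):
    """Closed-form StepLR value: base_lr * gamma ** (epoch // step_size)."""
    return base_lr * gamma ** (epoch // step_size)


# Learning rate used in each of the 25 epochs, starting from lr=0.001
schedule = [step_lr(0.001, epoch) for epoch in range(25)]

# Print the rate just before and at each decay boundary
for epoch in (0, 6, 7, 14, 21, 24):
    print(epoch, schedule[epoch])
```

Under these assumptions the rate stays at 0.001 for epochs 0 to 6 and is divided by 10 at epochs 7, 14, and 21, so the last few epochs run at roughly 1e-6.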

Convolutional network as a fixed feature extractor

- Freeze every layer except the final FC layer

- Set `requires_grad = False` so that gradients are not computed for the frozen layers

- This saves time: gradients are skipped for most layers, although the forward pass is still computed

model_conv = torchvision.models.resnet18(pretrained=True)
for param in model_conv.parameters():
    param.requires_grad = False

# Parameters of newly constructed modules have requires_grad=True by default
num_ftrs = model_conv.fc.in_features
model_conv.fc = nn.Linear(num_ftrs, 2)

if use_gpu:
    model_conv = model_conv.cuda()

criterion = nn.CrossEntropyLoss()

# Observe that only the parameters of the final layer are being optimized,
# as opposed to before.
optimizer_conv = optim.SGD(model_conv.fc.parameters(), lr=0.001, momentum=0.9)

# Decay LR by a factor of 0.1 every 7 epochs
exp_lr_scheduler = lr_scheduler.StepLR(optimizer_conv, step_size=7, gamma=0.1)

model_conv = train_model(model_conv, criterion, optimizer_conv,
                         exp_lr_scheduler, num_epochs=25)

>>> Best val Acc: 0.967320
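The effect of freezing can be checked without downloading any weights. The sketch below is only an illustration: `backbone` is a hypothetical two-layer stand-in for the pretrained network (not ResNet-18), and `head` plays the role of the new FC layer. It counts trainable versus frozen parameters and confirms that the backward pass leaves the frozen parameters without gradients.

```python
import torch
import torch.nn as nn

# Hypothetical tiny stand-in for a pretrained backbone (not ResNet-18)
backbone = nn.Sequential(nn.Linear(8, 16), nn.ReLU())
for param in backbone.parameters():
    param.requires_grad = False  # freeze: no gradients are computed for these

# New layer; its parameters have requires_grad=True by default
head = nn.Linear(16, 2)
model = nn.Sequential(backbone, head)

trainable = sum(p.numel() for p in model.parameters() if p.requires_grad)
frozen = sum(p.numel() for p in model.parameters() if not p.requires_grad)

# One forward and backward pass on random data
loss = model(torch.randn(4, 8)).sum()
loss.backward()

# Frozen parameters never receive a gradient; the head does
frozen_grads = [p.grad for p in backbone.parameters()]
print(trainable, frozen)
print(all(g is None for g in frozen_grads), head.weight.grad is not None)
```

Only the 34 parameters of `head` (16 x 2 weights plus 2 biases) are trainable here, while the 144 backbone parameters stay fixed, which mirrors passing just `model_conv.fc.parameters()` to the optimizer.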
